Role of perceptual uncertainty in reward-driven learning

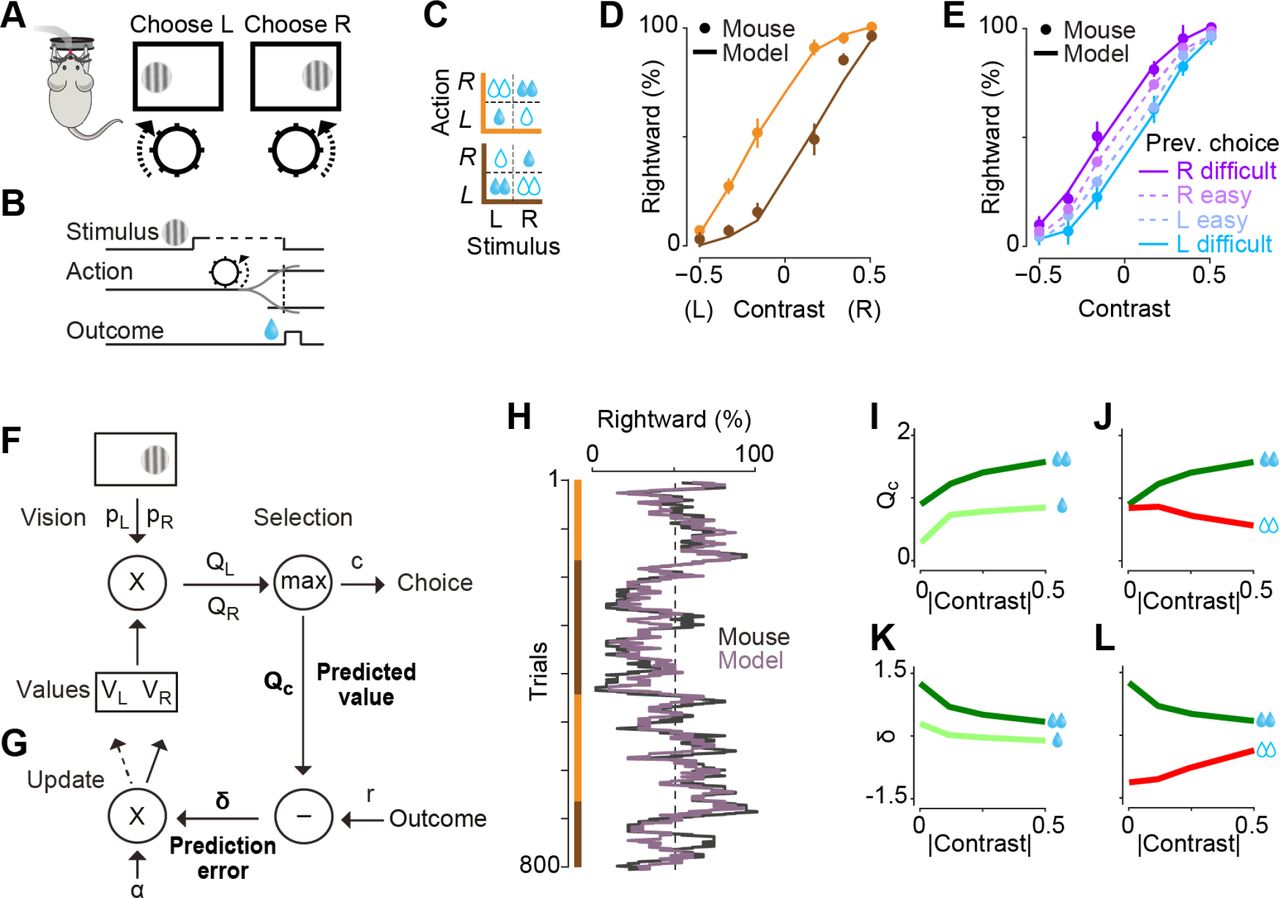

(Figure reproduced from Lak et al., Neuron 2020: Behavioral and computational signatures of decisions guided by reward value and sensory confidence)

In a standard reinforcement-learning setting, expected returns are compared against the returns actually obtained, and the difference (the reward prediction error) is used to update our learnt values and action policy. The “expected returns”, or predictions, depend on our knowledge of the current state (more accurately, our inference about it) and on the actions we have recently taken, as in a table of Q-values. Uncertainty about the state should therefore carry over into the reward prediction error, and thus into the amount of learning at that time. This state uncertainty often stems from perceptual uncertainty, i.e. noisy or incomplete sensory information used for state inference. Lak et al. studied how mice learn a perceptual decision-making task in which they had to choose the appropriate motor action based on noisy visual cues in order to obtain water rewards. We modelled the improvement in task performance as a reinforcement-learning process in which value is a combination of sensory confidence and reward. Fitting these models to the behavioural data offered a parsimonious explanation of the animals’ sequences of choices and patterns of errors.
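
As a rough illustration of how sensory confidence can scale learning, the sketch below implements one possible confidence-weighted value update. It is not the model fitted in Lak et al.; the Gaussian sensory noise, the flat prior, the greedy choice rule, the starting values, and all names (`belief_right`, `trial_update`, `simulate`, `sigma`, `alpha`) are assumptions made for this example.

```python
import math
import random


def belief_right(percept, sigma):
    """P(stimulus > 0 | percept), assuming Gaussian sensory noise and a flat prior."""
    return 0.5 * (1.0 + math.erf(percept / (sigma * math.sqrt(2.0))))


def trial_update(stimulus, percept, values, sigma=0.3, alpha=0.2):
    """Run one trial and update `values` in place.

    Assumes stimulus != 0: positive means 'right' is rewarded, negative 'left'.
    Returns (choice, reward, prediction_error).
    """
    p_right = belief_right(percept, sigma)
    confidence = {"left": 1.0 - p_right, "right": p_right}
    # Expected value of each action: sensory confidence times learnt reward value.
    q = {action: confidence[action] * values[action] for action in ("left", "right")}
    choice = max(q, key=q.get)  # greedy choice (an assumption; ties go to 'left')
    correct = "right" if stimulus > 0 else "left"
    reward = 1.0 if choice == correct else 0.0
    # The prediction depends on confidence through q, so a rewarded trial with
    # low confidence yields a larger prediction error than a confident one.
    delta = reward - q[choice]
    values[choice] += alpha * delta
    return choice, reward, delta


def simulate(n_trials=500, sigma=0.3, alpha=0.2, seed=0):
    """Simulate a session; returns the final values and the fraction of rewarded trials."""
    rng = random.Random(seed)
    values = {"left": 0.5, "right": 0.5}  # hypothetical starting values
    contrasts = [-0.5, -0.25, -0.1, 0.1, 0.25, 0.5]  # hypothetical signed stimulus strengths
    n_rewarded = 0
    for _ in range(n_trials):
        stimulus = rng.choice(contrasts)
        percept = rng.gauss(stimulus, sigma)  # noisy internal estimate of the stimulus
        _, reward, _ = trial_update(stimulus, percept, values, sigma, alpha)
        n_rewarded += int(reward)
    return values, n_rewarded / n_trials
```

Calling `trial_update` with the same stimulus but a percept close to the decision boundary gives a larger prediction error after a reward than a percept far from it, which is the sense in which state uncertainty modulates the amount of learning. A softmax choice rule or separate learning rates could be substituted without changing that basic structure.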